Our mission is simple – to empower everyone to unleash the full potential of AI.

Xinference started as an open source project built by practitioners frustrated with the status quo. Serving large AI models was too slow, too rigid, and too expensive. So we built something better: a unified inference platform that lets you deploy any model, on any hardware, anywhere.

The project is now widely adopted, with over 9.3k GitHub stars, 6 million downloads, and a global community of developers who use it to power everything from research prototypes to production systems at scale.

Today we are an Australian-owned business with global reach. Our team of ML infrastructure engineers and researchers is distributed across Australia, Singapore, and broader Asia. We operate at the intersection of open source and enterprise: everything we build is grounded in the community and hardened for the demands of real-world enterprise deployment.

Stay up to date on the future of AI

Connect with the Xinference team and community at conferences, webinars, and local meetups across Asia-Pacific and beyond. Hear directly from the engineers building the platform.

View all events →

Get the latest on new model support, performance improvements, enterprise features, and partnership announcements. Be the first to know what's coming next.

Read the blog →

At Xinference, you'll work on critical infrastructure that hundreds of enterprises depend on every day. We move quickly, we think carefully, and we care deeply about our place in the future of AI.

We're distributed across Australia, Singapore, and broader Asia — join us today!

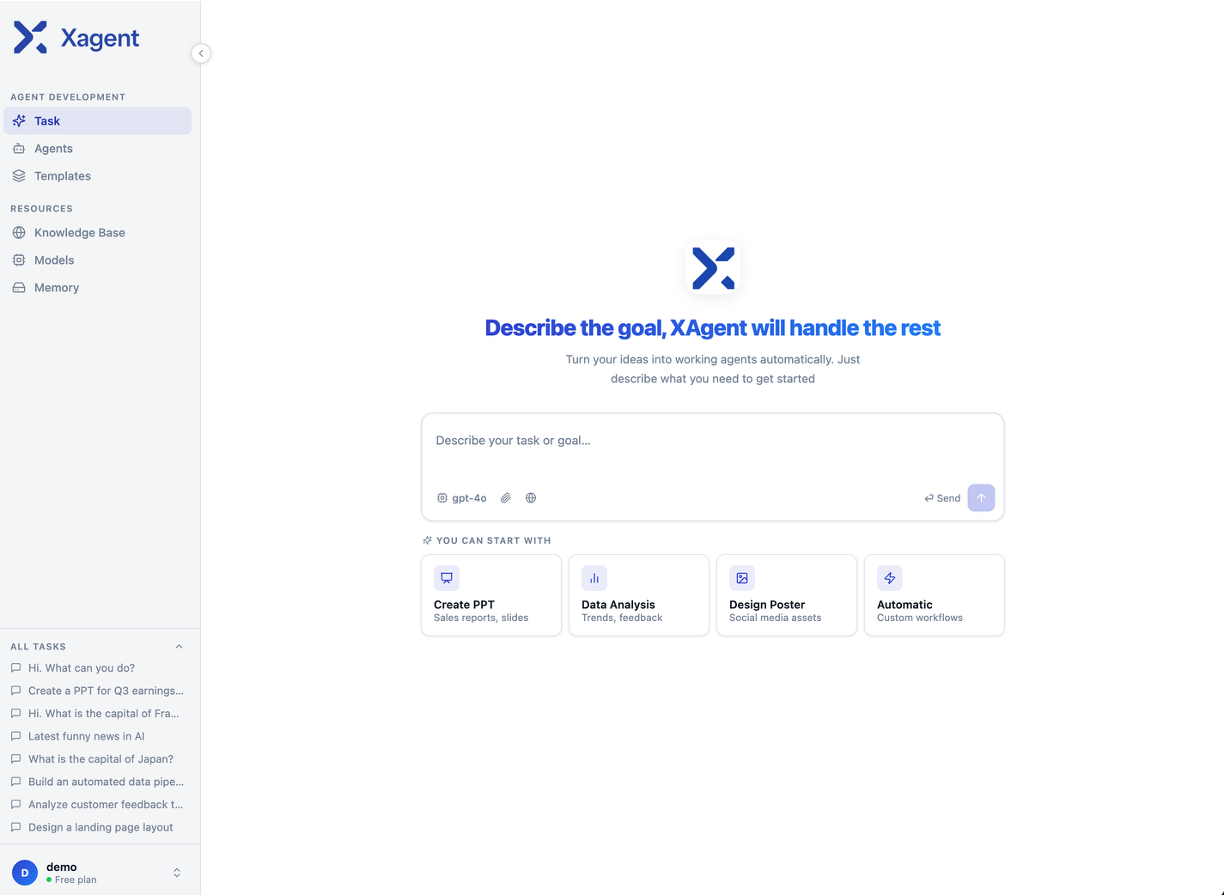

See all open roles

Build enterprise-grade agents just by typing what you want.

Everything you need to know about Xinference and how it fits into your AI stack.

Xinference is an open-source platform that lets you deploy and serve large language models, embedding models, image models, and more — all through a unified API. It abstracts away the complexity of model loading, hardware management, and scaling so your team can focus on building applications.

Cloud providers charge you for every token processed through their managed AI services, and your data passes through their infrastructure. With Xinference, you deploy models on your own infrastructure — cloud, on-prem, or hybrid.

Xinference is a unified, production-ready inference platform that gives you full control over which models to run, which GPU to use, and where to deploy, all while delivering best-in-class performance and cost optimisation.

Pricing is based on the number of nodes per cluster. Xinference Enterprise costs US$15k per node per cluster, billed annually.

For example, a small deployment of 2 nodes (usually ~16 GPUs) would cost US$30k per annum. A larger deployment of 250 nodes (usually ~2,000 GPUs) would cost about US$3.75m per annum. Each cluster is billed separately, so running multiple clusters means paying for the nodes in every cluster.
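The per-node arithmetic can be sketched in a few lines of Python. This is only an illustrative estimate, not an official quote: the function name `annual_cost_usd` is hypothetical, and it assumes each cluster is simply billed for its own node count at the US$15k rate quoted above.

```python
# Assumption: flat list price of US$15k per node, per cluster, per annum,
# taken from the figures quoted in this FAQ. Real quotes may differ.
PRICE_PER_NODE_USD = 15_000


def annual_cost_usd(nodes_per_cluster):
    """Estimate annual cost for a list of clusters.

    `nodes_per_cluster` is a list with one node count per cluster,
    e.g. [2] for a single 2-node cluster or [2, 250] for two clusters.
    Each cluster is billed for its own nodes (hypothetical helper).
    """
    if any(n < 0 for n in nodes_per_cluster):
        raise ValueError("node counts must be non-negative")
    return sum(n * PRICE_PER_NODE_USD for n in nodes_per_cluster)


if __name__ == "__main__":
    print(annual_cost_usd([2]))       # single small cluster
    print(annual_cost_usd([250]))     # single large cluster
    print(annual_cost_usd([2, 250]))  # two clusters, billed separately
```

Under these assumptions, a 2-node cluster comes to US$30,000 a year and a 250-node cluster to US$3,750,000 a year.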

Xinference Enterprise delivers better performance and enterprise-grade reliability. Our customers pick the Enterprise solution because it delivers comprehensive hardware compatibility, enables running multiple models on a single GPU, and supercharges performance with up to 2x greater throughput.

Most importantly, Xinference Enterprise comes with critical enterprise management features like RBAC, audit logs, a unified management console and SLA guarantees.

With Xinference, you can choose to run your models on your own infrastructure — cloud or on-premises — so your prompts and data never leave your environment. This makes Xinference purpose-built for industries with strict data requirements like finance and healthcare.

Xinference provides a RESTful API compatible with OpenAI's protocol, meaning any tool already built around OpenAI's API works with Xinference by changing a single line of code. Xinference integrates with popular third-party libraries including LangChain, LlamaIndex, Dify, and Chatbox. Kubernetes deployment via Helm is also supported for teams running containerised infrastructure.
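As a rough illustration of what OpenAI-protocol compatibility means in practice, the sketch below builds a standard chat completion request where the only Xinference-specific setting is the base URL. The host, port, `/v1` path prefix, model name, and placeholder API key are all assumptions for illustration; check your own deployment for the actual values. It uses only the Python standard library, and the helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: a server reachable at this address. This is the one line
# you would change when pointing an OpenAI-style client elsewhere.
BASE_URL = "http://localhost:9997/v1"


def build_chat_request(base_url, model, prompt, api_key="not-needed"):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # hypothetical model name supplied by the caller
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request):
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    req = build_chat_request(BASE_URL, "my-model", "Hello!")
    print(req.full_url)
    # Uncomment once a server is running and "my-model" is deployed:
    # print(send(req)["choices"][0]["message"]["content"])
```

Because the request body follows the OpenAI chat completion shape, existing client libraries that accept a custom base URL should be usable in the same way.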